Roughly 12 percent of Horizon Europe proposals submitted in 2025 will receive funding, and the European Commission's own interim evaluation concedes that close to seven out of ten high-quality applications fail not because they lack merit, but because the programme is short roughly EUR 82 billion of what it would take to fund everything scoring above the quality threshold. This is the brutal arithmetic that frames every conversation about EU research and innovation grants, and it has a useful corollary for anyone preparing a proposal: passing the threshold is necessary but no longer sufficient. The proposals that actually get signed are not simply the ones that avoid mistakes. They are the ones whose structure, language, and internal logic make the evaluator's job easy, fast, and free of doubt.

This piece walks through the real anatomy of a funded Horizon Europe proposal under the 2026 to 2027 work programmes, section by section, and identifies the structural choices that separate the funded from the merely fundable.

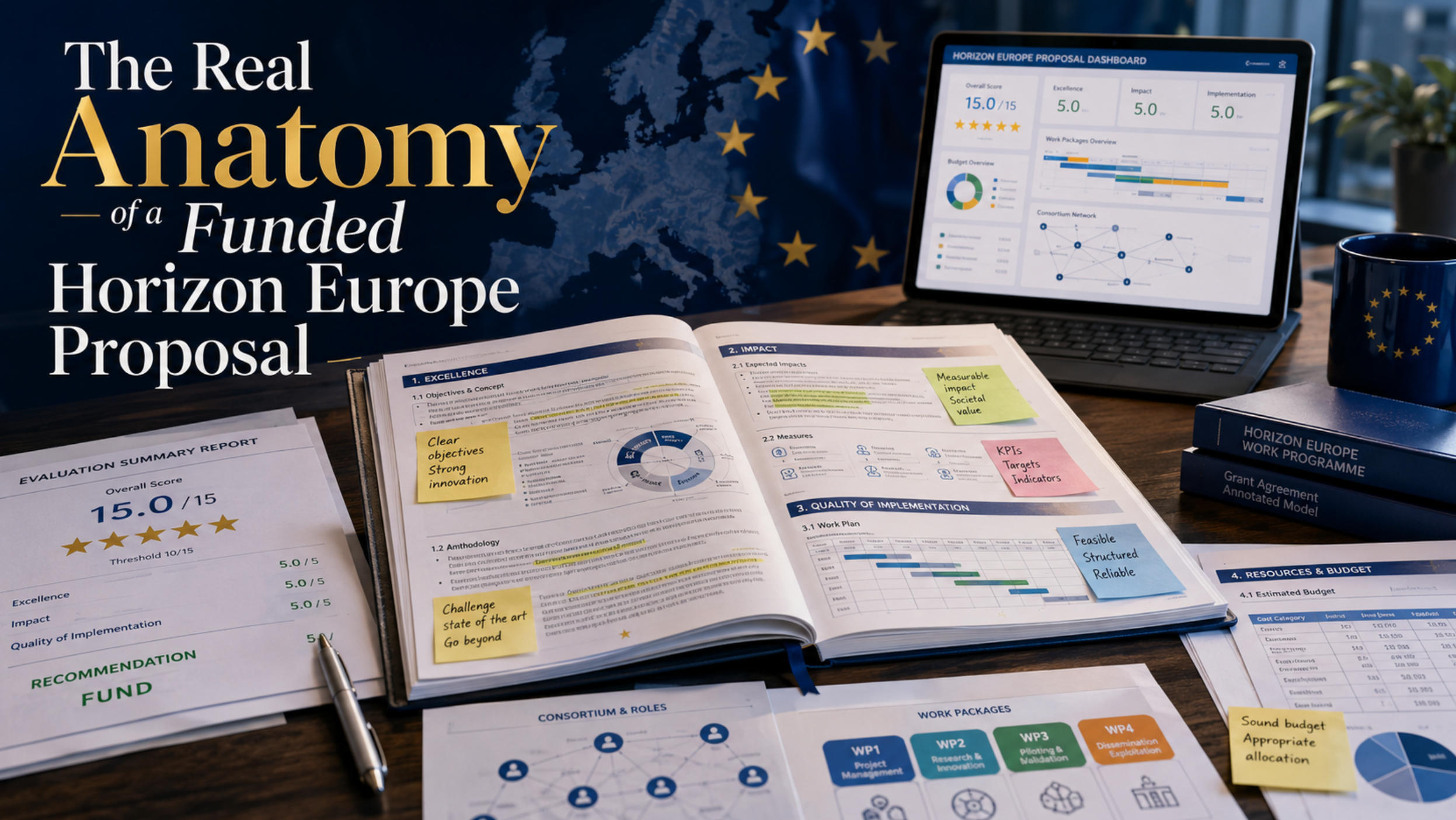

The Architecture: Part A, Part B, and the Three Criteria

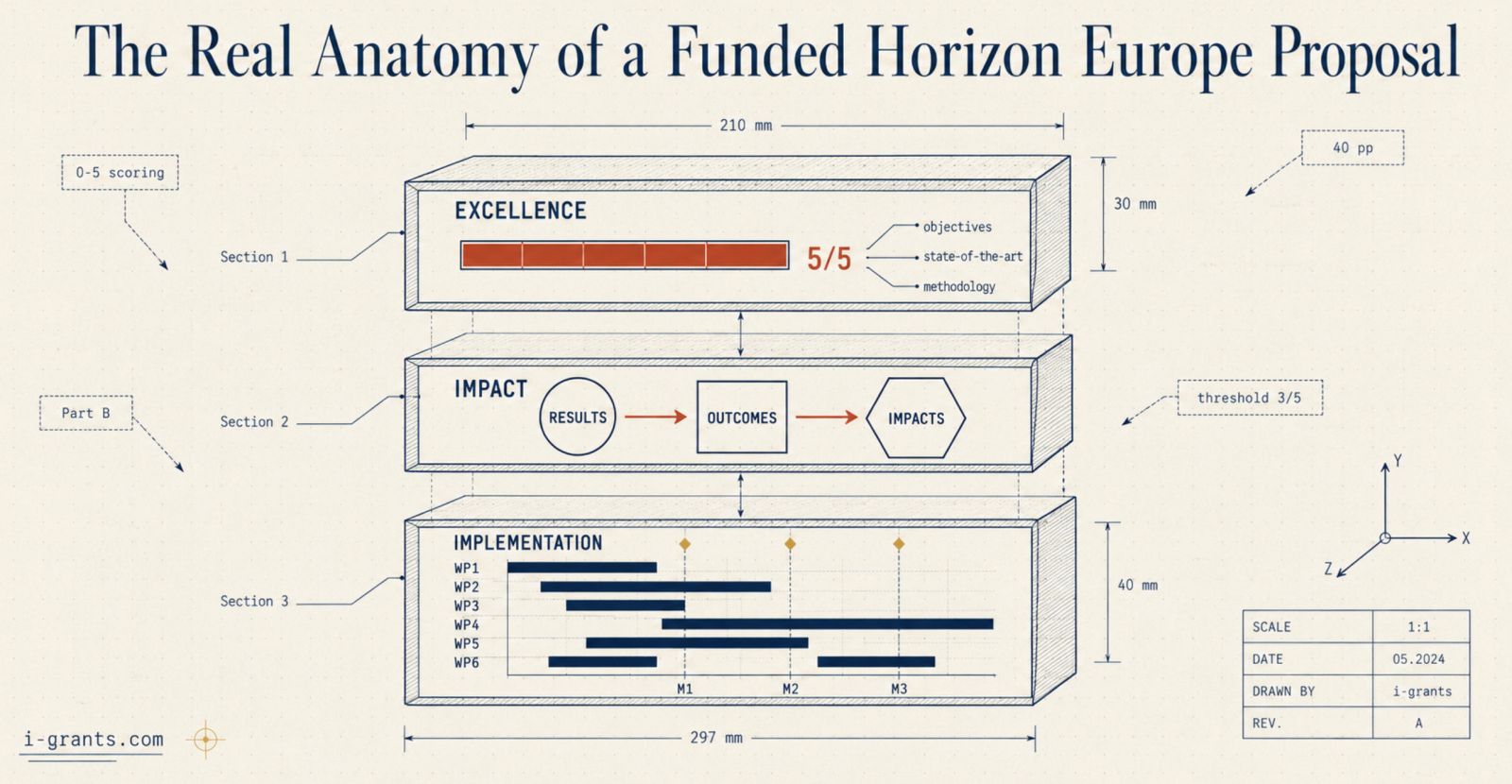

A Horizon Europe proposal has two parts and only two. Part A is the administrative forms generated by the Funding and Tenders Portal: title, abstract, partner identifiers, budget tables, ethics self-assessment, security self-assessment, declarations. Most of Part A is structured data, and little of it can be rewritten persuasively. Part B is the PDF narrative, and it is where the proposal actually wins or loses. Under the revised 2026 to 2027 templates issued by the European Commission in December 2025, Part B for Research and Innovation Actions and Innovation Actions is capped at 40 pages, or 45 if the topic uses lump sum funding. Coordination and Support Actions are capped at 25 pages. Anything beyond the limit is disregarded by evaluators; truncation is automatic, not negotiable.

Part B is divided into three sections that map one to one onto the three evaluation criteria: Excellence, Impact, and Quality and Efficiency of Implementation. Each criterion is scored from 0 to 5 by independent expert evaluators, with a threshold of 3 out of 5 on each criterion and a minimum total of 10 out of 15. The three criteria carry equal weight for Research and Innovation Actions, but for Innovation Actions the Impact criterion receives a 1.5 weighting in the ranking formula, which means that for higher Technology Readiness Level work, the second section is genuinely the most important part of the entire document.
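
The scoring arithmetic is easy to misjudge, so here is a minimal sketch of it in Python. It assumes the thresholds are checked on the unweighted scores and that the 1.5 weighting is applied only when Innovation Action proposals are ranked; the class and function names are illustrative, not part of any official tool.

```python
from dataclasses import dataclass

CRITERION_THRESHOLD = 3.0  # minimum score on each criterion
TOTAL_THRESHOLD = 10.0     # minimum unweighted total out of 15
IA_IMPACT_WEIGHT = 1.5     # Impact weighting used when ranking Innovation Actions


@dataclass(frozen=True)
class Scores:
    excellence: float
    impact: float
    implementation: float


def passes_thresholds(s: Scores) -> bool:
    """Assumption: thresholds apply to the unweighted scores."""
    lowest = min(s.excellence, s.impact, s.implementation)
    total = s.excellence + s.impact + s.implementation
    return lowest >= CRITERION_THRESHOLD and total >= TOTAL_THRESHOLD


def ranking_score(s: Scores, action_type: str) -> float:
    """Score used for ranking; only Innovation Actions ("IA") weight Impact."""
    weight = IA_IMPACT_WEIGHT if action_type == "IA" else 1.0
    return s.excellence + weight * s.impact + s.implementation


if __name__ == "__main__":
    a = Scores(excellence=4.5, impact=4.0, implementation=4.5)
    b = Scores(excellence=4.0, impact=5.0, implementation=4.0)
    for name, s in (("A", a), ("B", b)):
        print(name, passes_thresholds(s), ranking_score(s, "RIA"), ranking_score(s, "IA"))
```

Proposals A and B both total 13 out of 15 unweighted, so they tie as Research and Innovation Actions. Ranked as Innovation Actions, B scores 15.5 against A's 15.0, purely because its points sit in Impact.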

The structure of Part B is fixed by template and may not be reordered or renamed. What can change is what fills each subsection, and that is where funded proposals separate from rejected ones.

Section 1, Excellence: The Difference Between Interesting and Ambitious

The Excellence section asks three things in sequence: what the project is trying to achieve, why it is ambitious in light of the current state of the art, and how methodologically sound the approach is. Reviewers see hundreds of "we propose a novel method to address an important challenge" openings and treat them as background noise. A funded Excellence section opens with a specific, falsifiable claim. Not "we will improve diagnostic accuracy in oncology imaging," but something closer to: "we will demonstrate that a federated learning architecture trained across seven European hospital networks can reduce false negative rates in early-stage pancreatic cancer screening from 31 percent (current standard) to below 15 percent on an independently held test set of 12,000 anonymised scans, raising the project from TRL 4 to TRL 6 within 42 months."

That sentence does several things at once. It states what will be true at the end. It names the metric. It quantifies the baseline. It declares the TRL trajectory. It implies the scale of evidence required for proof. Evaluators scoring this paragraph against the Excellence rubric have something to evaluate. The same content phrased as a generic mission statement has nothing to evaluate, which is why it scores in the 2.5 to 3.5 range: below threshold at worst, and at best in the band that gets a proposal rejected for budget reasons even if the science is genuinely good.

State-of-the-art treatment is the second place where Excellence scores diverge sharply. Weak proposals list adjacent literature and say their approach is "novel." Funded proposals identify a specific gap, name the leading approaches that have failed to close it, explain why they have failed, and locate their own contribution inside that gap. Citations should be recent, with the bulk within the past three to five years, include both academic and industrial sources where relevant, and reference any preceding Horizon 2020 or Horizon Europe projects whose results the consortium is building on. The CORDIS database is the right place to verify what has already been funded in adjacent territory; ignoring it produces an Excellence section that reviewers will read as either uninformed or strategically vague.

Methodology is where Innovation Actions and Research and Innovation Actions diverge in evaluator expectations. Research and Innovation Actions at TRL 2 to 6 are expected to show scientific rigour: hypothesis structure, controls, statistical power, replication plans, data governance. Innovation Actions at TRL 6 to 8 are expected to show engineering and validation rigour: pilot site selection, demonstration protocols, comparators, end-user testing methodology. In both cases the assessment that triggers a high score is whether the methodology section answers the question a sceptical reviewer would ask if they were trying to find the proposal's weakest point. Funded proposals anticipate that question and answer it explicitly.

Interdisciplinarity is increasingly required rather than optional. The 2026 to 2027 work programmes embed social sciences and humanities expectations across most clusters, particularly Cluster 1 Health, Cluster 5 Climate, Energy and Mobility, and Cluster 6 Food and Bioeconomy. A proposal that names a humanities or social science partner but assigns them token effort and no leadership of a work package will be scored down for tokenism. A proposal that genuinely integrates such expertise, for instance by giving a behavioural science team responsibility for a co-design work package whose outputs feed back into the technical workstream, will score on the dimension that purely technical consortia leave on the table.

Section 2, Impact: The Pathway, Not the Promise

The single biggest shift between Horizon 2020 and Horizon Europe, and the structural change that most often separates funded from rejected proposals in 2026, is the move from generic impact statements to the Key Impact Pathways framework. Horizon Europe defines impact along three dimensions (scientific, societal, and economic and technological), each broken down into three pathways, for a total of nine. A funded Impact section addresses the dimensions relevant to its topic, not all nine pathways, and does so through a structured causal chain: results, then outcomes, then impacts.

The distinction between these three terms is now rigorously enforced by evaluators. Results are the immediate, tangible outputs the project produces: a prototype, a dataset, a guideline, a trained cohort. Outcomes are what changes for direct target groups during or shortly after the project: adoption by a particular regulatory body, deployment in a specific industry segment, integration into clinical practice at named sites. Impacts are the longer-term societal, economic, or environmental shifts the outcomes contribute to: reduced emissions in a sector, improved survival in a patient population, lower costs in a public service. Proposals that conflate these three (and many do) lose a full point on Impact, which under tight competition is enough to push them below the funding line.

What evaluators want to see in 2026 is a pathway that is plausible step by step. Plausibility means baselines (where are we now), benchmarks (what counts as success at each stage), and assumptions (what has to be true outside the project for the pathway to hold). It also means named stakeholders. "Industrial partners" is vague. "Tier 1 automotive suppliers headquartered in Germany, France, and Italy who collectively control 62 percent of the European market for the relevant component" is specific and credible. Funded proposals secure letters of intent or support from named end users and reference those letters in the Impact section, not just in the appendix.

Dissemination, exploitation, and communication measures, often shortened to DEC, used to be where applicants padded the page with conferences, websites, social media plans, and journal targets. Under the 2026 template the page limits are tighter and the prescriptive guidance is reduced, which means evaluators read DEC catalogues more sceptically. A funded DEC section identifies one or two primary channels per stakeholder group, links each activity to a specific outcome it advances, and treats exploitation as a serious commercial or policy plan rather than a list of intentions. Where commercialisation is part of the route to impact, a credible business model is expected even at the prototype stage. Where policy adoption is the route, named policy processes (a specific EU directive review cycle, a particular national strategy renewal) carry far more weight than a generic reference to "informing EU policy."

The Impact section also has to address two requirements that originate elsewhere in EU strategy. The first is open science: every Horizon Europe project must commit to open access for peer-reviewed publications under Article 17 of the Grant Agreement, and a Data Management Plan outline is expected in the proposal, with a full DMP due six months after project start. The second is the requirement to discuss sex and gender variables in the research itself wherever scientifically relevant, particularly in health, social sciences, and any technology design that interacts with diverse user populations. This is not the same as team gender balance, which sits elsewhere; this is about whether the research itself is methodologically robust across the population it claims to serve.

Section 3, Implementation: Where Reviewers Look for Reasons to Doubt You

The Implementation section is the part of the proposal where reviewers stop being asked to believe and start being asked to verify. The architecture is fixed: a work plan, a consortium description, and a resources justification. A funded Implementation section organises its work into six to ten work packages, almost always including one for project management and one for dissemination, exploitation and communication. Fewer than six work packages tends to read as under-structured for a project lasting three to four years and consuming three to six million euros (the typical range for a Research and Innovation Action). More than ten tends to read as fragmented and difficult to govern.

Each work package has at least one deliverable, and in most cases several, and each should contribute to at least one project milestone. Funded proposals tend toward roughly three to five milestones for the whole project, not per work package, because once the grant agreement is signed those milestones become contractual. The same applies to deliverables: every deliverable listed in the proposal is a legal obligation, and inflating the count to look productive becomes a project management problem the moment the grant is awarded.

A Gantt chart is effectively mandatory. Reviewers do not have time to reconstruct a timeline from prose, and a clear visual schedule that fits on one or two pages, with work packages, tasks, milestones, and deliverables aligned to a project month axis, is the single most efficient way to communicate that the project is feasible. Funded proposals also tend to include a PERT chart or equivalent dependency diagram showing how work packages feed into each other. Reviewers use this to test whether downstream work packages have realistic inputs from upstream ones.

The risk section, often treated as an afterthought, is where many otherwise strong proposals lose a point. The expectation in 2026 is a structured register of technical, organisational, and external risks, each with a probability rating, an impact rating, and a specific mitigation linked to a work package or partner. Generic risks (the perennial "delays in recruitment" or "consortium communication challenges") with generic mitigations score badly. Project-specific risks, for instance "regulatory approval for the second pilot site may slip by six months if the national authority requires additional safety data, which would cascade into a delay in WP5 validation," with named contingencies score well.
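
One way to keep a register honest while drafting is to hold it as structured data and check it before it goes into the template. The sketch below is only an illustration: the three-point scale, the field names, the list of "generic" phrases, and the mitigation wording are all assumptions made for the example, not anything the template prescribes.

```python
from dataclasses import dataclass

LEVELS = {"low": 1, "medium": 2, "high": 3}  # hypothetical three-point scale

# Phrases treated as generic here, taken from the examples in the text.
GENERIC_PHRASES = ("delays in recruitment", "consortium communication")


@dataclass(frozen=True)
class Risk:
    description: str
    category: str      # "technical", "organisational" or "external"
    probability: str   # key of LEVELS
    impact: str        # key of LEVELS
    mitigation: str
    work_package: str  # work package the mitigation is linked to, e.g. "WP5"

    @property
    def severity(self) -> int:
        """Simple probability x impact product, used only for sorting."""
        return LEVELS[self.probability] * LEVELS[self.impact]


def review(risks):
    """Return (risk, problem) pairs for entries a reviewer would likely question."""
    problems = []
    for r in risks:
        if not r.work_package:
            problems.append((r, "mitigation not linked to a work package"))
        if any(p in r.description.lower() for p in GENERIC_PHRASES):
            problems.append((r, "generic risk, not project-specific"))
    return problems


if __name__ == "__main__":
    register = [
        Risk("Delays in recruitment", "organisational", "medium", "low",
             "Start hiring early", ""),
        Risk("Regulatory approval for the second pilot site may slip by six months",
             "external", "medium", "high",
             "Prepare additional safety data in parallel", "WP5"),
    ]
    for risk, problem in review(register):
        print(f"{risk.description!r}: {problem}")
```

Run on these two entries, the check flags the recruitment risk twice (generic wording, no work package) and leaves the regulatory risk alone, which mirrors how the two would be scored.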

The consortium description in section 3.2 is where the strongest proposals do something subtle. Rather than copying partner profiles from Part A, they describe the consortium functionally: what each partner contributes, why that contribution is necessary, where the expertise sits that no other partner provides, and how the partners' roles connect. Funded consortia avoid two characteristic failures. The first is geographic decoration, where partners are added from particular member states to satisfy a perceived political balance without a clear functional role. The second is single-partner work packages, where one organisation runs an entire work package alone, which contradicts the collaborative logic of Horizon Europe and reads as a thinly disguised subcontract. Each work package should have a clear lead and at least two or three contributing partners with distinct functions.

The resources justification translates everything above into euros. Funded proposals show realistic person-month allocations across partners (a coordinator typically takes more management effort than a regular partner, and that is expected), justify equipment and subcontracting against specific tasks, and apply the 25 percent indirect cost flat rate cleanly. Budgets that allocate effort evenly across partners regardless of their roles are a red flag for evaluators and a frequent reason for clarifications during grant agreement preparation.
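
The arithmetic behind a partner budget line can be sketched in a few lines of Python. This is a rough illustration under stated assumptions: the flat rate is applied to personnel and other direct costs but not to subcontracting (check the applicable grant agreement for the exact base), the function names are hypothetical, and all figures in the usage example are invented.

```python
INDIRECT_FLAT_RATE = 0.25  # flat rate named in the text


def partner_budget(person_months, monthly_rate, other_direct=0.0, subcontracting=0.0):
    """Estimate one partner's budget in euros (illustrative, not a costing tool)."""
    personnel = person_months * monthly_rate
    # Assumption: subcontracting is excluded from the base the flat rate applies to.
    flat_rate_base = personnel + other_direct
    indirect = INDIRECT_FLAT_RATE * flat_rate_base
    return {
        "personnel": personnel,
        "indirect": indirect,
        "total": flat_rate_base + subcontracting + indirect,
    }


def effort_is_uniform(person_months_by_partner, tolerance=0.05):
    """True when every partner's effort is within `tolerance` of the mean."""
    values = list(person_months_by_partner.values())
    mean = sum(values) / len(values)
    return all(abs(v - mean) <= tolerance * mean for v in values)


if __name__ == "__main__":
    # Invented figures: 60 person-months at EUR 7,000 per month.
    print(partner_budget(60, 7000, other_direct=30000, subcontracting=20000))
    print(effort_is_uniform({"A": 48, "B": 48, "C": 48}))
    print(effort_is_uniform({"COORD": 72, "B": 40, "C": 30}))
```

With these invented figures the partner's personnel cost is EUR 420,000, the indirect costs are EUR 112,500, and the total is EUR 582,500. The uniformity check returns true for the evenly split consortium, which is the pattern evaluators treat as a red flag, and false for the one where the coordinator carries more effort.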

The Consortium Logic Reviewers Actually Apply

Behind every Implementation score is a question the reviewer asks silently: if I had to bet that this exact group of organisations will deliver this exact work plan on this exact budget, would I bet yes? The collaborative minimum for a Research and Innovation Action or Innovation Action is three independent legal entities established in three different countries, at least one of them in an EU Member State and the others in different Member States or Horizon Europe Associated Countries, but minimum compliance is not what produces strong scores. Funded proposals typically have eight to fifteen partners, calibrated to the breadth of the work, with a clear core team and a credible coordinator.

Coordinator track record matters disproportionately. The Funding and Tenders Portal and the CORDIS project database make every coordinator's history visible, and reviewers do look. A consortium led by a first-time coordinator with no prior framework programme experience is not automatically rejected, but it requires compensating evidence: a strong management plan, an experienced project manager, and ideally a co-coordinator or scientific lead with framework programme history. The same logic applies to widening countries (Member States with lower research and innovation performance). Their involvement is encouraged by the programme, with 58 percent of Horizon Europe collaborative projects now including at least one widening country partner, but a widening-country coordinator with limited track record needs a stronger consortium around them to score competitively.

Industrial involvement, where the call topic implies it, is assessed on substance rather than logos. A list of small and medium enterprises in the consortium counts for less than a clear exploitation pathway that explains how those companies will use the results commercially. Letters of support from end users, whether hospitals for a health project, grid operators for an energy project, or ministries for a policy project, carry weight when they reference specific deployments rather than generic interest.

Cross-Cutting Issues That Quietly Shape Your Score

Cross-cutting requirements do not have their own section, which is precisely why proposals lose points on them. Gender, ethics, open science, FAIR data, and social sciences and humanities integration are scored within the three main criteria, and failing to address them meaningfully depresses scores in Excellence (methodology), Impact (relevance to societal challenges), and Implementation (consortium and resources). A proposal that mentions these issues only in dedicated sub-paragraphs reads as compliance-driven. A proposal that integrates them into the substantive logic of the project reads as serious.

Open science deserves particular attention because it is now mandatory rather than aspirational. The Data Management Plan outline in the proposal needs to specify which datasets will be made open, under which licences, on which repositories (Zenodo, OpenAIRE, domain-specific platforms), and with what metadata standards. The FAIR principles (Findable, Accessible, Interoperable, Reusable) should be referenced explicitly. Proposals that treat data sharing as a constraint to be minimised score worse than proposals that treat it as a strategic asset for impact.

What Changed for the 2026 to 2027 Work Programmes

The European Commission's December 2025 revision of the standard application forms introduced several structural shifts that experienced grant writers are still adapting to. Page limits have tightened across action types, removing roughly five pages of room without removing any of the substantive requirements. Mandatory tags and certain prescriptive guidance have been removed from the template, which sounds like a relaxation but in practice gives evaluators more interpretive discretion, raising the cost of vagueness. Section 2.3 (the impact summary table) has become optional, which is a trap: applicants who skip it by default often lose the structural clarity it provided, while those who keep it wherever it adds genuine clarity score better.

Lump sum funding is now expected to cover approximately 50 percent of Horizon Europe projects by 2027, fundamentally changing how budgets and work packages are designed. Under lump sum, payments are released on work package completion rather than against actual costs, which puts pressure on the work package definitions in the proposal. Each work package becomes a payment milestone, so the work package boundaries have to be sharp, the completion criteria explicit, and the budget per work package defensible on its own.

A blind evaluation pilot has been extended to selected calls, where the first stage of evaluation is performed without consortium identities visible. Critics, including the independent observers who monitored the 2025 Cancer Mission evaluations, have argued that blind evaluation impairs assessment of methodological track record. Whether it expands further or contracts in 2027 remains under review, but where it applies, proposals have to communicate excellence without leaning on coordinator reputation.

The strategic priorities embedded in the 2026 to 2027 work programmes have also shifted. The reduction of Key Strategic Orientations from four to three signals consolidation around competitiveness, security, and the twin green and digital transitions. Proposals that recycle 2021 to 2024 narrative templates and "expected impact" language from earlier work programmes will read as out of step. The expected impacts in every Destination of every cluster have been rewritten for the current period, and proposals are expected to mirror that language closely.

The Quiet Architecture of a Top-Scoring Proposal

The funded Horizon Europe proposal does not look radically different from the rejected one at a casual glance. Both will be 40 pages, both will follow the template, both will contain a Gantt chart and a consortium table and a Data Management Plan outline. The differences are in the architecture beneath the surface.

A funded proposal is internally consistent. The objectives in section 1.1 reappear, restated in operational terms, in the work packages in section 3.1. The results listed in the Impact pathway map onto specific deliverables in specific work packages. The risks in section 3.1 are addressed by mitigations that show up in the resources allocated to the relevant partners. The cross-cutting requirements thread through methodology, work plan, and consortium rather than sitting in isolated boilerplate paragraphs.
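
This kind of consistency can be checked mechanically before submission. The sketch below assumes the consortium keeps simple mappings from objectives to work packages and from results to deliverables; the identifiers (O1, WP2, D2.1 and so on) and the helper name are hypothetical.

```python
def untraced(items, links):
    """Return the items that have no link target, in sorted order."""
    return sorted(item for item in items if not links.get(item))


if __name__ == "__main__":
    # Hypothetical identifiers for illustration only.
    objectives = ["O1", "O2", "O3"]
    objective_to_work_packages = {"O1": ["WP2"], "O2": ["WP3", "WP4"]}

    results = ["R1", "R2"]
    result_to_deliverables = {"R1": ["D2.1"], "R2": []}

    print("Objectives with no work package:",
          untraced(objectives, objective_to_work_packages))
    print("Results with no deliverable:",
          untraced(results, result_to_deliverables))
```

Here objective O3 never reappears in a work package and result R2 maps onto no deliverable, which are exactly the loose ends an evaluator notices when cross-reading sections 1, 2 and 3.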

A funded proposal speaks the language of the call. The expected outcomes and impacts listed in the work programme Destination text appear, almost verbatim, in the proposal's Impact section, and are then traced back to the project's specific results. Evaluators are scoring fit to the call, not just intrinsic quality.

A funded proposal is written in a single voice. Consortium-written proposals, where each partner drafts their own section and the coordinator stitches them together, are easy to spot and difficult to score highly. A funded proposal almost always has a single lead writer, sometimes a coordinator, often a dedicated grant writer, who takes partner inputs and rewrites them into a unified narrative with consistent terminology, consistent emphasis, and a single argumentative spine.

Finally, a funded proposal anticipates the grant agreement. Every milestone, every deliverable, every work package is something the consortium intends to deliver and can deliver. Once the proposal is funded, its text becomes the Description of Action annexed to the grant agreement. Proposals written to impress evaluators but not to be executed produce projects that struggle from month one. Proposals written to be executed produce projects that are also easier to evaluate, because the logic is the same logic that will govern the next four years of work. That alignment between persuasion and execution is the single most reliable signal of a fundable proposal, and it is the architecture that every Horizon Europe applicant in 2026 and 2027 should be building toward.